When AI Exposes the Cracks

[Un]Churned Chapter 006

I spent a lot of time in the air and on the air this week. Between flights, podcast recording sessions, and catching up on my newsfeed, a few topics really stood out to me.

Here’s what’s been on my mind this week.

(This Week’s [Un]Churned 🎙️)

Episode 164: Why Your Post-Sales Budget Should Be 7% of Revenue (Not 10%) ft. Abbas Haider Ali

I had Abbas Haider Ali, SVP of Customer Success at GitHub, on [Un]Churned this week to talk about post-sales investment, proving ROI, and where AI actually fits into all of it once the hype fades.

Abbas made a distinction that really stuck with me. Customer Success is misallocated far more often than it is underfunded. When teams default to adding headcount or inflating coverage models without tightening ownership and outcomes, spend goes up but impact doesn’t. His case for keeping post-sales investment closer to 7% of revenue rests on discipline and intent.

We dug into what breaks when CS budgets drift and how to think about efficiency without devaluing the work. If you’re trying to defend CS investment, rethink your operating model, or prepare your team for an AI-shaped future, this episode is a practical place to start.

Stay tuned for Abbas’ complete playbook. He’ll share thoughts and tactics around resourcing, AI leverage, and building a defensible post-sales model that holds up under scrutiny.

Horizontal SaaS Meets the CS Reality Check

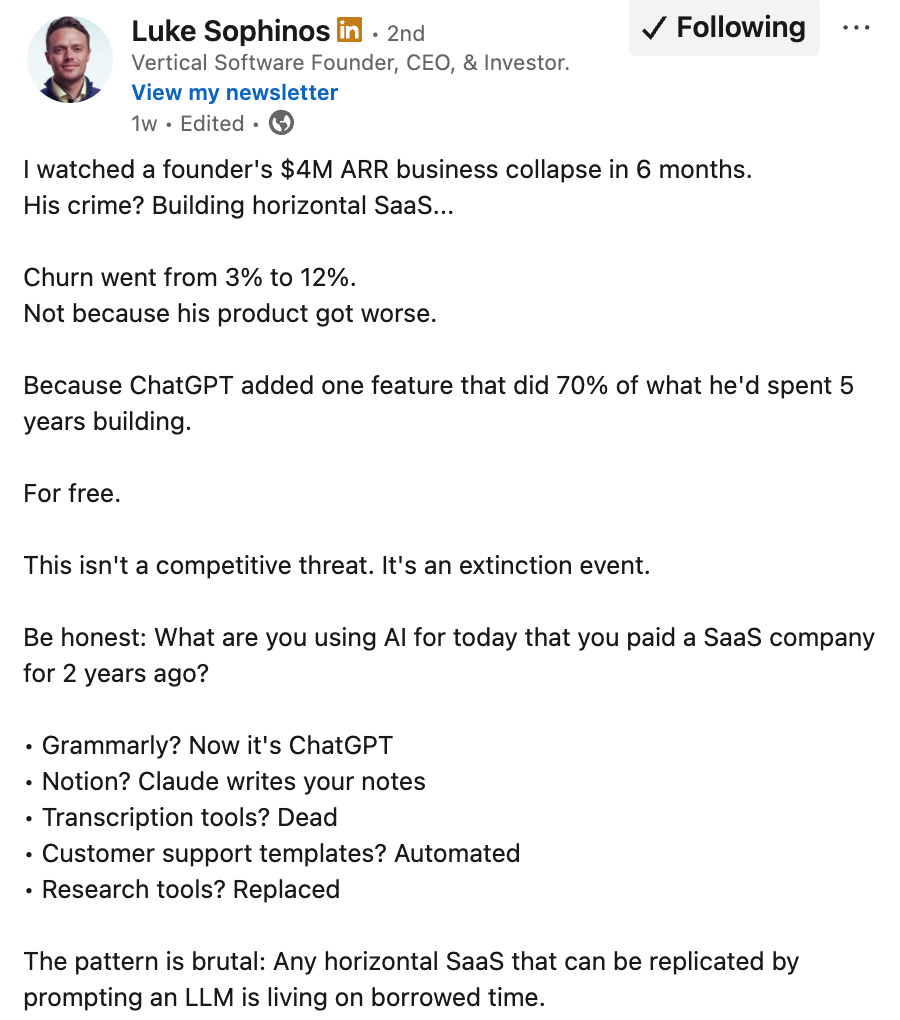

This week, Luke Sophinos laid out a blunt argument in his newsletter: horizontal SaaS is heading toward an extinction event as AI agents take over more generic workflows.

What survives won’t be tools built for everyone; it’ll be systems that own something hard: deep context, proprietary data, durable workflows, and real accountability. This isn’t about interfaces going away. It’s about where value actually lives once execution becomes automated.

From a Customer Success perspective, this hits close to home. The platforms and teams that win will go beyond surfacing insights to actually helping people act, decide, and prove outcomes. Generic tooling and shallow signals won’t hold up for long. As the bar rises, CS leaders will feel that pressure early, if they aren’t feeling it already.

The Claude Constitution (Through a Customer Success Lens)

I spent some time this week reading Anthropic’s Claude Constitution, and while it’s positioned as a framework for building safer, more aligned AI systems, I couldn’t help but read it through a Customer Success lens. As Anthropic describes it, the Constitution defines the kind of entity Claude is meant to be and the values that should shape its behavior.

That framing feels increasingly relevant for Customer Success. Training AI agents isn’t just about automation or efficiency. It’s about judgment. When an agent flags risk, recommends action, or moderates a customer interaction, it’s making decisions that affect trust. Those decisions need context, boundaries, and an understanding of consequences, not just data.

As AI agents take on more responsibility in post-sales, this is the lens CS leaders should adopt. Agents should act in ways that are explainable, defensible, and aligned with real customer relationships. Values and guardrails can’t be layered on after the fact. They have to be part of how agents are trained from the start.

That’s what makes the Claude Constitution a useful reference point: not as a policy document, but as a reminder that in Customer Success, alignment isn’t optional; it’s foundational.

Wrapping Up

Automation has a way of removing the excuses. As AI starts doing more of the execution, only the elements of a business built on judgment, clarity, and accountability really hold up. Bigger teams and louder tools stop being the answer pretty quickly.

The more interesting question is what the work looks like once the training wheels come off. How are you designing it? I’d love to hear.

See you next Monday 🧠

🌱 Josh

SVP, Strategy & Market Development @ Gainsight

👋 Connect with me on LinkedIn

🎧 Listen to more episodes of [Un]Churned
